Why Docker != Containers and Docker OSS != Docker Inc.

You try to convey a concept but it’s only when something (else) happens that people have their “Aha moment”.

Last year VMware introduced a project called Bonneville that later became vSphere Integrated Containers.

Having recently moved to the VMware Cloud Native Application Business Unit, working on Bonneville and VIC has been one item of my charter.

If you didn’t bother to check the blog posts linked above, in a nutshell, vSphere Integrated Containers is a technology that allows you to provision a VM while maintaining the Docker experience (API/CLI, image format, public registry, etc.). The advantage of doing this, in short, is that developers win (as they “want Docker”) and IT wins (because most IT shops have standardized operations around VMs).

This is often a hard concept to grasp because of a fundamental misconception that pervades the industry: Docker = containers.

This is about to change.

Enter unikernels.

From this point on, everything I write in this post is purely my personal opinion and has nothing to do with my professional role (just as a reminder).

A few days ago Unikernel Systems announced they were joining Docker (Inc.). There is a short video that talks about it here.

During the video there are a few gems.

This notion that “the ability to use the same Docker tools, which have been widely adopted with developers, will rapidly accelerate unikernels adoption” is very important in the context of understanding that Docker != containers.

One could go as far as paraphrasing that into (in the context of Bonneville): “The ability to use the same Docker tools, which have been widely adopted with developers, will allow users to leverage the traditional VM construct IT has standardized on”.

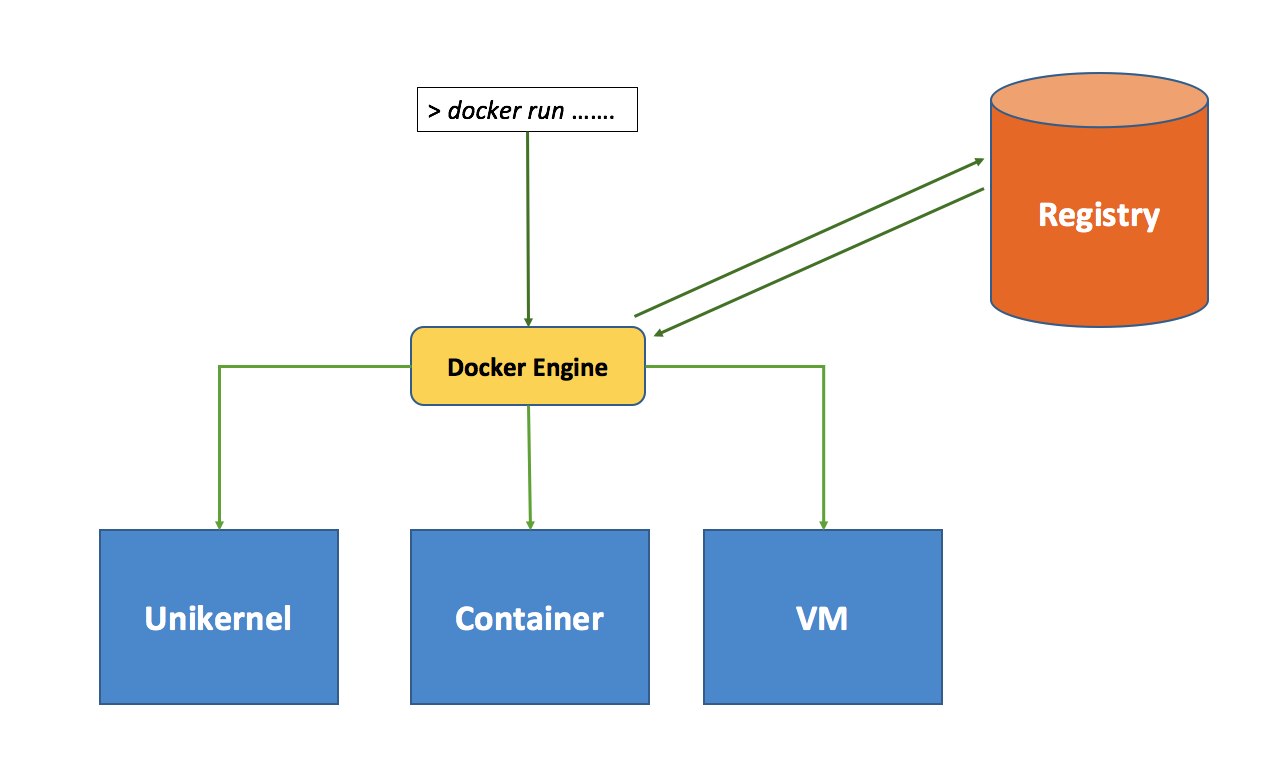

Or, to put it another way, as a picture (a somewhat simplified view):

You could, potentially, choose the right tool back-end (be it a Unikernel, a container or a VM) for the right job without disrupting the front-end experience.

This is a very powerful concept.
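To make the idea concrete, here is a minimal sketch of a single front-end sitting on top of interchangeable back-ends. Everything in it is hypothetical: `FrontEnd`, `Backend` and the three back-end classes are names made up for illustration, and none of this is how Docker, VIC or any unikernel toolchain is actually implemented. It only shows that the call the developer makes can stay the same while the thing being instantiated changes.

```python
from abc import ABC, abstractmethod


class Backend(ABC):
    """Hypothetical back-end: something that can start a workload from an image."""

    @abstractmethod
    def start(self, image: str) -> str:
        ...


class ContainerBackend(Backend):
    def start(self, image: str) -> str:
        return f"container started from {image}"


class VMBackend(Backend):
    def start(self, image: str) -> str:
        return f"VM started from {image}"


class UnikernelBackend(Backend):
    def start(self, image: str) -> str:
        return f"unikernel started from {image}"


class FrontEnd:
    """Stand-in for the developer-facing tooling (illustrative only)."""

    def __init__(self, backend: Backend) -> None:
        self._backend = backend

    def run(self, image: str) -> str:
        # The developer-facing call is identical whatever the back-end is.
        return self._backend.start(image)


if __name__ == "__main__":
    for backend in (ContainerBackend(), VMBackend(), UnikernelBackend()):
        print(FrontEnd(backend).run("nginx"))
```

The point of the sketch is the `run` method: swapping the back-end object changes what gets created, not what the developer types.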

By the way, it is important to understand that the VM in the picture above doesn’t represent a VM on top of which you run an OS which can then run a container. In other words, we are not talking about whether you should instantiate containers on a bare metal OS or on an OS running in a VM.

What I am showing in the picture above is a VM instantiated by Docker (that’s what the vSphere Integrated Container technology does).

If you want to go deep, I suggest you watch Ben Corrie’s session on Bonneville at QCon last year. It’s well worth the investment of 50 minutes of your time.

Back to unikernels now.

There have been a few very deep technical rants on the blogosphere about why unikernels are unfit for production (which is a somewhat relative statement given that, depending on who you ask, you may hear that VMs aren’t fit for production; not sure where this would leave containers honestly).

There have also been quite a few online discussions on the topic, one of which is this very lengthy thread on Hacker News.

What stood out for me from this sea of comments were the statements that Solomon Hykes (Founder and CTO of Docker) made:

"Computers do run only one unikernel at a time. It’s just that sometimes they are virtual computers. Remember that virtualization is increasingly hardware-assisted, and the software parts are mature. So for many use cases it’s reasonable to separate concerns and just assume that VMs are just a special type of computer.

For the remaining use cases where hypervisors are not secure enough, use physical computers instead.

For the remaining use cases where the overhead of 1 hypervisor per physical computer is not acceptable, build unikernels against a bare metal target instead. (the tooling for this still has ways to go)."

I will candidly admit that I am not 100% sure what he meant by that. Sometimes the nomenclature we all use to refer to things in an IT stack changes, and this isn’t helping. If you also consider that we are at the early stages and that there is a natural “mess” around the alternatives at our disposal... well, you get the picture.

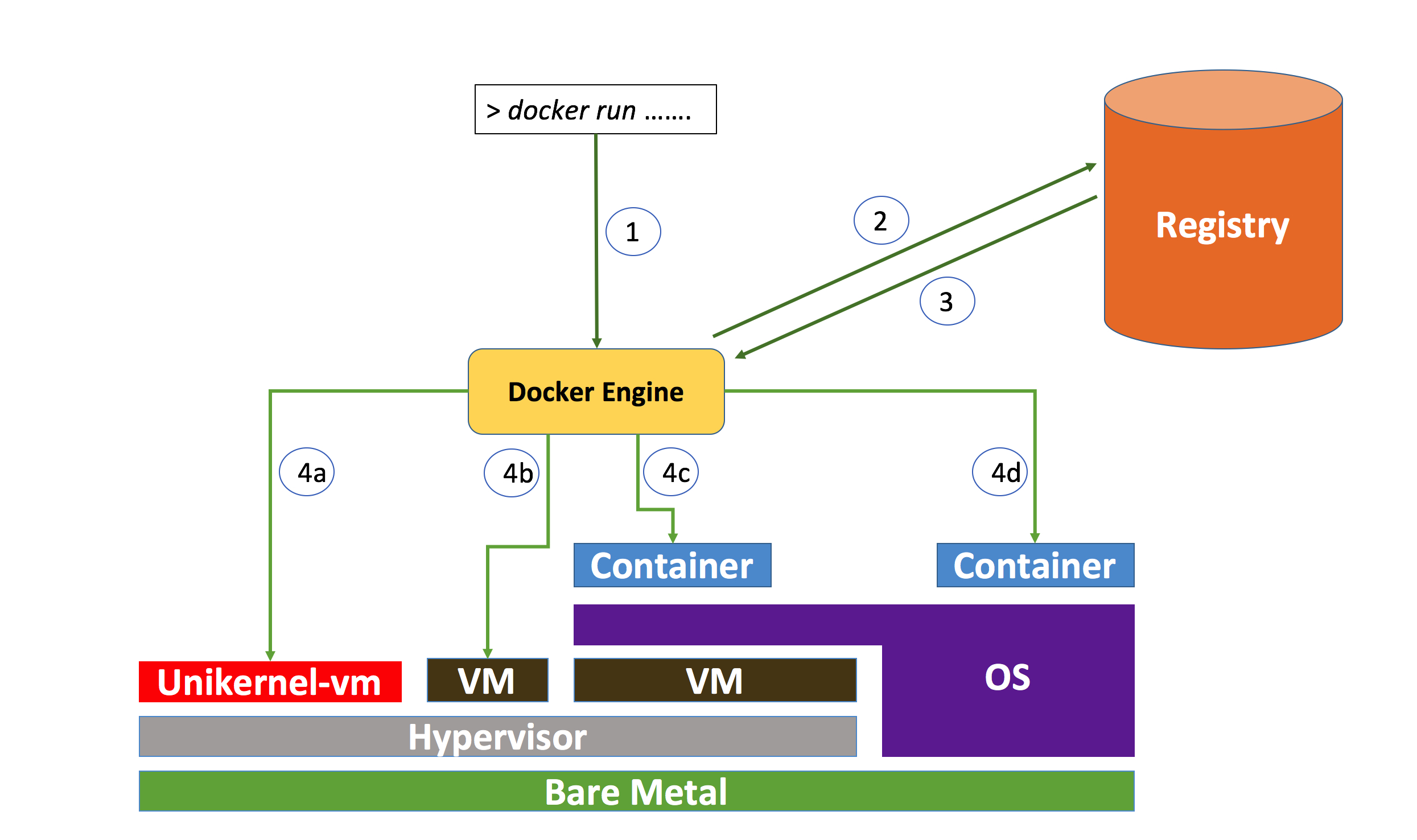

What I took away from these statements is that Docker wants to offer options, be they containers on bare metal, containers on VMs on hypervisors, unikernels on hypervisors, etc.

I see VIC (which I’d refer to as “containerVMs” on hypervisors) as yet another one of those options that Solomon is alluding to.

So a more detailed picture of the alternatives that are panning out for the future should, potentially, look like this:

The fact that Docker bought Unikernel Systems was a big wake-up call for many and, if nothing else, it’s going to be a great educational moment for the industry as a whole.

This, however, comes at the cost of additional confusion:

I said this is a good thing because it gives people the option of picking the back-end that best fits their use case. However, unikernels would have existed regardless of Docker acquiring the company behind them. In other words, a user could have picked unikernels anyway and been done with it.

The cynic in me suggests that we should see this through the lens of Docker Inc., the commercial entity behind the Docker OSS (Open Source Software) project.

Docker Inc. has (rightly so) every interest in remaining “top of stack”. As long as they can be “the interface people see and talk to”, they are in a strong spot.

They are trying to establish a “control point”. This isn’t per se a bad concept and it’s something that any commercial company is incentivized to achieve as a way to remain differentiated.

I have never come across a commercial company whose strategy was to live in the lowest level of the stack waiting to be commoditized by the technology that could easily sit on top of it. There are well known industry practices and patterns around this.

Now imagine if Docker had not acquired (and doubled down on) Unikernel Systems.

If unikernels were to be successful in the future we’d see two separate and parallel stacks (a Docker/container stack and a unikernel stack) with potentially different north-bound interfaces.

In that case a third party (e.g. Kubernetes), agnostic to the back-end run-times and their interfaces, would provide the single entry point to deploy onto both stacks. This would, as a result, commoditize Docker (both Docker the OSS project and Docker Inc. the commercial entity).
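A rough sketch of that scenario, under stated assumptions: `DockerStack`, `UnikernelStack` and `Orchestrator` are hypothetical names invented for this example and do not reflect the real Docker or Kubernetes APIs. The two stacks expose different north-bound calls, and the third party wraps both behind one `deploy` entry point.

```python
from typing import Callable, Dict


class DockerStack:
    """Hypothetical container stack with its own north-bound call."""

    def run_container(self, image: str) -> str:
        return f"docker stack: running container {image}"


class UnikernelStack:
    """Hypothetical unikernel stack with a different north-bound call."""

    def boot_image(self, image: str) -> str:
        return f"unikernel stack: booted {image}"


class Orchestrator:
    """Illustrative third party that hides both stacks behind one entry point."""

    def __init__(self) -> None:
        self._adapters: Dict[str, Callable[[str], str]] = {}

    def register(self, kind: str, deploy_fn: Callable[[str], str]) -> None:
        # Each stack is adapted to the same "take an image, deploy it" shape.
        self._adapters[kind] = deploy_fn

    def deploy(self, kind: str, image: str) -> str:
        if kind not in self._adapters:
            raise ValueError(f"no back-end registered for {kind!r}")
        return self._adapters[kind](image)


def build_orchestrator() -> Orchestrator:
    orchestrator = Orchestrator()
    orchestrator.register("container", DockerStack().run_container)
    orchestrator.register("unikernel", UnikernelStack().boot_image)
    return orchestrator


if __name__ == "__main__":
    orchestrator = build_orchestrator()
    print(orchestrator.deploy("container", "nginx"))
    print(orchestrator.deploy("unikernel", "nginx"))
```

In this arrangement users only ever talk to `Orchestrator.deploy`; each stack underneath becomes a replaceable detail, which is the commoditization risk described above.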

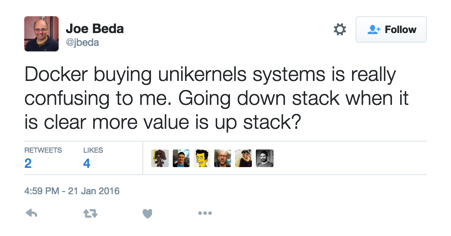

Joe is right when he says that the value is up in the stack but, I think, you need to establish a proper and solid control point before you can exploit and take advantage of it up in the stack.

Now that Docker != containers is hopefully a bit clearer than before, there is another key concept that people will need to familiarize themselves with in order to understand maneuvers like this.

This other key concept is: Docker OSS != Docker Inc.

This “friction” is already evident among companies and projects like Kubernetes, Mesos and Docker (Inc.). They (and many others) are all “fighting” (commercially) for being that “entry point”.

As a matter of fact, while everyone is standardizing on and using Docker (OSS), Docker Inc. is “just” one of those companies that are trying to build a business model around it.

Docker Inc. has an (obvious) advantage but it is important to keep in mind that Docker OSS != Docker Inc.

In conclusion, just to make it very clear, I want to call out that I am not trying to paint Docker Inc. as the devil.

They are a great company with an ethic that I like (a lot) and they are doing what ALL commercial companies (including the one I work for) are supposed to do: generating profits.

Massimo.