Azure Virtual Machines: what sort of cloud beast is it? (UPDATED)

A few weeks ago, at TechEd, Microsoft announced Azure Virtual Machines. In other words their response to a growing sentiment that PaaS is too early for many and IaaS is the natural first step into the cloud (let's put SaaS aside for a second). Yes, I am over-simplifying just to avoid a ten pages blog post this time but it does look like Google is on the same path here.

At TechEd what drew my attention were not the dozens of meaningless Microsoft Vs VMware marketing comparison tables in the general sessions. Instead what drew my attention was an overview session on Azure Virtual Machines done by the always great Mark Russinovich. While I work for VMware, I do have a tremendous amount of respect for what Microsoft has done there and I want to congratulate with them and Mark particularly for the achievement. Building things like these isn't trivial.

It's an interesting presentation and I strongly suggest you take a look. For those of you that are not familiar with the "traditional" Azure (PaaS) you may also want to have a look at this as a piece of background.

From this Azure IaaS presentation I was particularly intrigued by the HA (High Availability) part of it. This is from around minute 23 to approximately minute 36. In general Mark was describing how this IaaS part of Azure is different from the more traditional and original PaaS part of Azure. In fact, while the latter is more geared towards new generation scale out applications, the former is going to be more geared towards the traditional applications model where the infrastructure is assumed to be reliable and resilient. Some refer to these models as design for fail clouds Vs enterprise clouds. I wrote about this concept in the past and I like to refer to them as UDP Clouds Vs TCP Clouds.

I want to stress that I never try to imply one is better than the other. They are both viable and serve different purposes. It is of paramount importance that you understand what service you are buying though.

I have however to admit that, as I went through Mark's presentation, I was a bit confused. On one hand he was positioning this as an enterprise play, where durability and resiliency is built into the "TCP" cloud, but then some of the stuff looked a lot like a design for fail type of cloud (or "UDP" cloud).

Now onto the details. The first thing that Microsoft had to do to implement resiliency for this service was to ensure durability of the VHD files associated to the IaaS virtual machines. They decided not to reinvent the wheel and leverage the Azure Storage service as the repository where to host the VHD file. You would assume this implies some sort of "shared storage model" (albeit not in a traditional SAN/NAS sense) that allows the servers subsystem to become stateless and hence more resilient. I am referring to the usual things such as dynamic live relocation of a virtual machine from one host to another, automatic restart of virtual machines in case of host failures etc. This model is often (if not always) assumed to be at the core of an enterprise cloud where resiliency is built into the infrastructure layer.

To much of my surprise suddenly Mark starts talking about Failure Domains and Update Domains. These are traditional concepts in Azure that allow you to deploy your distributed design for fail PaaS application in a way that, whatever happens, you have at least one or more instances of your application up and running.

But wait a second. I thought we were talking about a different value proposition with Azure Virtual Machines where "uptime of your single server instance is 99.9%". Mark even made a comparison to Amazon where he underlined that this SLA isn't about a rack or a datacenter or an entire region but rather this SLA is for "your virtual machine". This led me (erroneously?) to think the model Microsoft have in mind is more of a "TCP" model Vs the Amazon "UDP" model.

Even more surprising is that he anticipated a scheduled downtime of any of your virtual machines for about 20 minutes a month to upgrade the hypervisor of the host where your virtual machine is running. I assume that hosting the VHD file on Azure Storage doesn't allow them to use LiveMigration to do a rolling update of the Hyper-V hosts. Otherwise why bringing the virtual machines down when you can easily shuffle them around on the other hosts in the cluster as you do a scheduled rolling upgrade of your hypervisors? This should be as easy as putting the host in some sort of maintenance mode.

If the above was surprising, the next is potentially a bit worrying if proven true. If Azure Virtual Machines can't do LiveMigration, can they do a host failover? Unfortunately Mark didn't touch on this point. I'll make a bold assumption and I'll say Azure won't failover your virtual machines should a physical server fail. After all, the focus this presentation had on the fault domains and update domains led me to think that they won't (failover). (See update below)

What are the implications of this? If Azure Virtual Machines is built on top of the traditional Azure PaaS architecture (as it seams) this means a lot of components are not redundant (wise decision for a design for fail type of cloud) so the likelihood that a component breaks is very high. Imagine if a TOR switch (which I understand is not redundant in Azure) was to fail. How many VMs would be down? How do these get recovered? Manually?

Mark was suggesting workarounds to that such as using SQL mirroring which is good and fine. But how would that be different from, say, AWS? In other words, how would this be different then a design for fail type of cloud where you are responsible for architecting for uptime?

I just want to make clear that I am not trying to bash Azure. Really. I am just trying to understand better what sort of beast it is. Months ago I did put Azure in the third column (design for fail) of my Magic Rectangle analysis because that's where Azure PaaS fits.

I am leaning towards believing that what Microsoft announced at TechEd is more of a design for fail IaaS cloud than it is an enterprise IaaS cloud but... I could be wrong. Perhaps someone, if not Mark, can chime in and set the record straight.

And once again this isn't to say the design for fail model is bad and the enterprise model is good. An airplane isn't any better than a bi-cycle (and viceversa). It really boils down to what you want to do: different things for different purposes.

Massimo. Update (26/6/2012): Mark reached out on twitter and this is what he said.

@mreferre We wanted to have something for single-instance machines, but features for design-for-fail IaaS apps as well.

@mreferre We definitely do automated host failover for IaaS and PaaS VMs. Not clear for AWS…

I am still unclear what type of failover this is given my understanding is that PaaS instances are stateless and boot off a local hard drive. If anyone from MS with a good knowledge of the Azure IaaS backstage wants to provide more details, you are welcome to post them in the comment section.

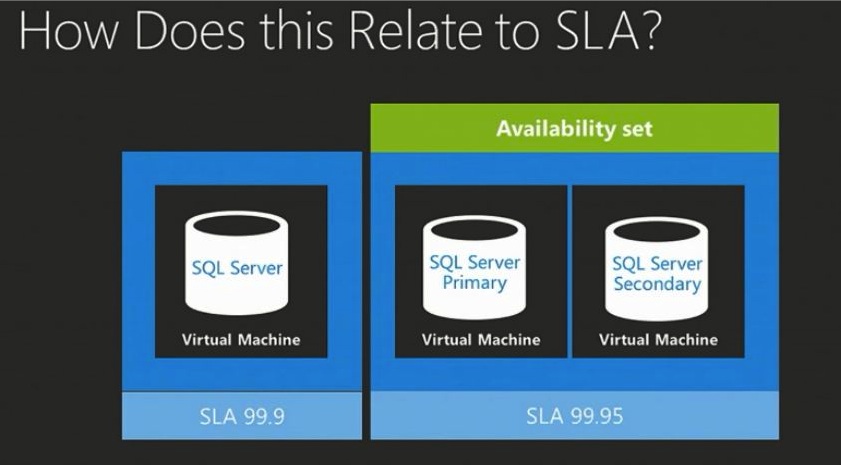

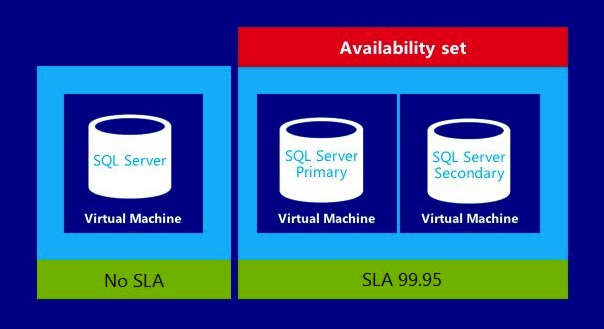

Update (12/11/2012): Marcel pinged me pointing to an interesting finding. Apparently Microsoft dropped the SLA for the single machine role. Marcel shows a couple of screenshot of the (supposedly) same slide took at different events.

The first screenshot refers to TechEd (June 2012) where they show the 99.9% availability for the single role instance. This is what I was discussing in this blog post.

The screenshot took at Build (October 2012) is “a bit different” and shows NO SLA for the single role instance.

Pictures attached for your convenience.

Teched (June 2012):

Build (October 2012):

I guess this explains a lot of things and a lot of doubts I originally shared in this post.